Dark Data, Legacy Dreams and Modern Reality: From 2012 FCI/DAC Nightmares to 2026 AI-Powered Data Intelligence

Disclaimer: This post reflects my personal opinion as an independent cybersecurity expert. How expert it really is — you decide, dear reader.

Reading yet another reminder that 85 % of organisational data is still dark data instantly transported me back 10–15 years.

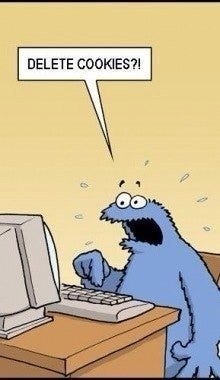

I remembered a product presentation by my future boss that ended with the classic line: “Come to the dark side, we have cookies.” At that time the world looked binary to me: clear light and darkness, no fifty shades of grey. I was hooked on security systems that could detect both external hackers and internal threats: insiders, corrupt employees, or those who looked loyal but kept a “fig in the pocket”, quietly hiding their real intentions.

That passion led me to two favourite technologies: IPS/NTA (intrusion prevention and network traffic analysis) for network visibility, and DLP for data leakage prevention.

The core ideas were solid even back then. The problem was execution. Everything required massive manual tuning because we didn’t have AI assistants that could simplify these tasks by orders of magnitude. Classification, policy creation, maintenance — it all demanded heavy upfront consulting. Many projects started with enthusiasm and died in the details.

Today the fundamental principles haven’t changed, but the tools have. As smart people say: knowledge of a few principles frees you from knowing many facts.

Modern Data Security Posture Management (DSPM) platforms, which lean heavily on LLMs and contextual graphs, finally make the old dream viable: automatic discovery and classification across the entire estate (cloud, on-prem, mainframe, SaaS) with minimal manual effort, real-time risk scoring, and automated orchestration into existing DLP, SIEM, and IAM tools.

The fairy tale we told ourselves in 2012 has become reality. What used to be blocked by the sheer volume of manual work is now achievable in weeks, not months.

My Top Practical Tips for Hunting Violators

External threats (network / attacker perspective):

Clearly separate network-based and agent-based approaches. Mirror traffic at as few chokepoints as possible, ideally the core switch. Agent-based monitoring eats resources fast (cloud APIs are a separate conversation).

Protocol support should be broad, but real value comes from metadata analysis speed and depth. Real-time blocking of sophisticated attacks is still mostly a fairy tale. Focus on understanding collected metadata to trace and remediate.

Track events religiously. Anomaly detection alone doesn’t guarantee 100% coverage, but it will definitely help you spot the bull in the china shop.

Maintain a living network map and asset inventory. This isn’t just for nice management reports — it’s your daily tool for vulnerability hunting and context.
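To make the event-tracking tip a bit more concrete, here is a minimal sketch of per-host anomaly detection on collected flow metadata. Everything in it is an assumption for illustration: the input shape (outbound bytes per host per hour), the function name `flag_unusual_hosts`, and the cut-off of 3.5 are hypothetical, and the robust median/MAD score is just one simple technique, not what any particular NTA product does.

```python
from statistics import median


def flag_unusual_hosts(history, current, threshold=3.5, min_samples=5):
    """Flag hosts whose current value is far from their own baseline.

    history: {host: [past hourly outbound byte counts]}  (hypothetical shape)
    current: {host: outbound byte count for the hour being checked}
    Returns {host: modified z-score} for hosts above the threshold.
    """
    flagged = {}
    for host, values in history.items():
        if host not in current or len(values) < min_samples:
            continue  # not enough baseline to judge this host
        med = median(values)
        # Median absolute deviation: a spread estimate that a single
        # past spike cannot distort much.
        mad = median([abs(v - med) for v in values])
        if mad == 0:
            continue  # perfectly flat baseline; a real system needs a fallback here
        # 0.6745 scales the MAD so the score is comparable to a z-score.
        score = 0.6745 * (current[host] - med) / mad
        if abs(score) > threshold:
            flagged[host] = round(score, 1)
    return flagged


# Made-up numbers: one quiet workstation, one host that suddenly uploads a lot.
history = {
    "10.0.0.5": [120, 130, 110, 125, 118, 122],
    "10.0.0.9": [900, 950, 870, 910, 930, 905],
}
current = {"10.0.0.5": 124, "10.0.0.9": 9800}

for host, score in flag_unusual_hosts(history, current).items():
    print(f"review {host}: score {score}")
```

The point of scoring each host against its own history is that a backup server and a laptop have very different "normal". The output is a list of hosts to look at, not a verdict, which is why the asset inventory matters for context.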

Internal threats (data leakage / insider perspective):

To truly understand data flows inside the organisation, start building and maintaining a connectivity graph.

Keyword analysis remains the solid foundation for leak hunting; everything else (ML, context, etc.) is a powerful addition.

Remember: DLP is not a product — it’s a triangle of people, processes, and technology. I like to illustrate it as “dark matter”: it absorbs everything and should release nothing. The “quasars” you see are exactly the leaks.

Monitoring without immediate prohibition is often the most effective long-term measure. Prevention and sanctions work best as exceptions, not the default.
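The three ideas above (connectivity graph, keyword analysis, monitoring without blocking) fit together naturally, so here is a small sketch that combines them. It is illustrative only: the keyword list, the `(source, destination, text)` event shape, the `@corp.example` suffix, and the function names are all hypothetical, and a real DLP deployment would add content extraction, context, and far richer policies.

```python
import re
from collections import defaultdict

# Hypothetical keyword list; a real one comes out of classification work.
KEYWORDS = ["confidential", "salary", "passport", "iban"]
PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(k) for k in KEYWORDS) + r")\b",
    re.IGNORECASE,
)


def find_keywords(text):
    """Return the sorted, lower-cased keywords found in a piece of text."""
    return sorted({m.group(1).lower() for m in PATTERN.finditer(text)})


def build_graph(events):
    """Build a connectivity graph keyed by (source, destination).

    events: iterable of (source, destination, text) tuples (assumed shape).
    Each edge records how many transfers were seen and which keywords hit.
    """
    graph = defaultdict(lambda: {"transfers": 0, "keywords": set()})
    for src, dst, text in events:
        edge = graph[(src, dst)]
        edge["transfers"] += 1
        edge["keywords"].update(find_keywords(text))
    return dict(graph)


def review_queue(graph, internal_suffix="@corp.example"):
    """List edges with keyword hits that leave the organisation.

    Monitor-only: nothing is blocked, the result is a queue for a human.
    """
    return sorted(
        (src, dst, sorted(edge["keywords"]))
        for (src, dst), edge in graph.items()
        if edge["keywords"] and not dst.endswith(internal_suffix)
    )


# Made-up events for illustration.
events = [
    ("alice@corp.example", "bob@corp.example", "Salary review for Q3, confidential"),
    ("alice@corp.example", "alice.home@mail.example", "Attached: passport scan and IBAN"),
    ("carol@corp.example", "vendor@partner.example", "Lunch on Friday?"),
]

for src, dst, hits in review_queue(build_graph(events)):
    print(f"review {src} -> {dst}: {', '.join(hits)}")
```

Note that the internal message with keyword hits still lands in the graph; it simply does not reach the review queue. Keeping the full graph is what lets you later ask who normally talks to whom, and which flows are new.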

A universal rule that applies to both kinds of hunting:

Ideas (and insights) are like fish. If you want to catch little fish, you can stay in the shallow water. But if you want to catch the big fish, you’ve got to go deeper. Down deep, the fish are more powerful and more pure. They’re huge and abstract. And they’re very beautiful. — David Lynch

In threat hunting this means: don’t stop at surface-level alerts and obvious IOCs. Dive deeper into context, relationships, business impact, and subtle behavioural patterns. The real “big fish” — sophisticated insiders, advanced persistent threats, or critical dark data exposures — hide in the depths. Modern tools with AI and multidimensional graphs finally let us go there without drowning in manual effort.

The approaches we loved 10–15 years ago are still relevant. The difference now is that AI and modern data intelligence layers remove the biggest historical blocker — endless manual labour.


---

© 2026 Michal Domalewski / Naked CyberSec. All rights reserved.